
AI for Producers: Your Q1 Directory of Intelligent Innovations in Music Tech

17 Jan 2024

Stay up to date with the latest artificial intelligence technology aimed at making music: from sound design to mixing and distribution

Every industry seems to be entering a monumental age as we see serious artificial intelligence tools going mainstream. For music producers working in a creative field, AI has reshaped and altered how many of us go about our day-to-day work. Here on the Loopcloud Blog, we’ve recently taken a look at Where We Are with AI and What’s Next, and in this article, we’ll bring together the essential AI releases for musicians.

Last year, we also made a list of 10 AI Sites Musicians Need to Know About and 10 AI Plugins Producers Need to Try Today, but a lot has changed even in that small span of time. Technology continues to evolve at a rapid rate, so keeping up can be a struggle, and a list like this demands constant updating if we’re to stay in the loop on the latest and greatest tools available to us.

With this article, we aim to provide a regularly updated hub containing the most useful recent innovations in AI tech within the music industry. So, if you’re looking for ways to enhance your music production workflow and simplify your lifestyle through the advantages of AI, you’ve come to the right place.

Essential AI releases for Musicians

Google’s Dream Track and AI Music Tools raise the bar for workflow efficiency

YouTube recently announced their new, upcoming AI technologies, Dream Track and AI Music Tools, in partnership with Google DeepMind. Dream Track, specifically, is a tool designed to assist creators of YouTube Shorts. They’ve partnered with nine artists who’ve agreed to allow their voices to be synthesised by AI, so that creators can combine a custom text prompt with their chosen singer’s voice to generate copyright-free music to complement their YouTube Shorts. Getting a fully-custom vocal take from Sia for your latest quick vid is very nearly a reality.

AI Music Tools, meanwhile, is still in its developmental stage, but from the sneak peek Google have provided us with, it already seems like we’re on track to receive a whole array of AI-assisted tools that will further enhance our creative options as musicians and audio enthusiasts. Take a look at the trailer yourself to see the AI turn an uploaded vocal melody into a polished, organic-sounding saxophone sample.

Riffusion allows for high-grade AI-generated song snippets

A prime example of just what AI sound generation is capable of and where it might be heading in the future, Riffusion generates vocal phrases with full backing tracks based on the lyrics you prompt it with. Simply input your desired lyrics, choose the musical style from five presets, and you’ll be given your short sample, or riff, to use creatively at your own leisure. Riffusion bears some similarities to Google’s MusicLM, which you’ll find further down this list.

The most astounding thing about this technology is how fast you can generate an infinite stream of riffs that all sound like they’ve come from a professional recording studio. The best part is, it’s completely free to access for anyone and everyone! Although this type of sound generation might not find a staple place in every creative’s workflow, that doesn’t take away from its innovative marvel and the potential evolutions it might give birth to.

iZotope Neutron and Ozone continue to pioneer AI for mixing and mastering

iZotope have been a leading titan in the music production industry for years, and they were one of the first to take AI seriously. Their Neutron and Ozone mixing and mastering suites (VST/AU) have been on sale for years and have seen intelligent ‘Assistant’ features added to help analyse the audio material running through them and suggest initial settings for their high-quality modules.

Google MusicLM allows for full AI-generated audio

Google’s MusicLM is an example of an AI that pretty much takes full control. All the user has to do is feed it a text prompt, and it will generate a sound file based on the input. You could then resample this audio as you wish, or combine it with more MusicLM-generated files. Just remember that true creativity comes from your ability to harness these new technologies in innovative ways, and try to be open-minded about their potential applications.

FADR allows for separate drum stem extraction

There are plenty of AI stem extractors on the market now – each utilising their own algorithm, attempting to target different types of track stems for optimum separation. For example, one of the innovators in this field, LALAL.ai, has released and updated numerous algorithms since its inception, allowing the user to extract specific instrumental stems, such as piano, electric guitar, bass guitar, etc.

Then, along came FADR. This stem extractor stands out as particularly useful due to its ability to separate each drum type (kick, snare, and other) from the entire kit, something that competitors have yet to incorporate. As you can imagine, having control over individual drum stems when mixing allows for much more fluid creative control, compared to having to mix your drum kit as an inseparable whole. You can even try running your track through different stem extractors to find which algorithm will give you the best stem of each instrument type.

Synplant 2 transforms samples into synth patches using AI

Synplant is an AI-infused synth built on the wondrous ‘Genopatch’ technology. This uses AI to generate a range of synth patches based on the sonic characteristics of an uploaded sample. You can import any wacky sample of your choosing and listen as the AI creates a few branches for variations. Check out the video below at 20:43 for a more in-depth demonstration.

The biggest advantage of the Genopatch workflow is that, once the sample has been converted into a synth patch within Synplant, you can modify it in a similar manner to any other synth, giving you access to internal FX, LFO, and enveloping. You can either pick a patch generation similar to the original sample, or one that the AI has completely skewed and morphed into something new. The choice is yours, and the possibilities are nearly endless.

Krisp.ai

Krisp.ai is an AI extension to many of the most used online video/voice chat programs. It’s essentially an AI meeting assistant with a whole host of audio-cleaning and analysis features, such as transcribing speech from members of a call live and removing unwanted background noise, from keyboard clicks to noisy ceiling fans. There are numerous settings which can target commonplace background noises found within online video calls.

Krisp.ai is truly jam-packed with some of the best noise-cleaning features to date, and although this neat addition to the list doesn’t relate directly to music production, who knows what potential offshoots this tech could bring to our industry?

sonible smart:deess

To quote sonible’s smart:deess trailer directly, this plugin “turns de-essing from a tedious chore into fast progress”. The plugin (VST/AU) automatically applies highly effective de-essing to any sound you might put in its path.

smart:deess has been trained to detect nine types of phonemes, pinpointing the sibilant and plosive sounds usually present in the human voice. However, the algorithm can apply its spectral processing to other audio as well. This plugin is just one of many from sonible’s new AI-driven smart range, which you can explore at your own leisure from their website.

XLN Audio’s Life lets you create beats from your most cherished memories

That subheading might sound a little unclear, or perhaps like something out of science fiction. However, with the implementation of AI, XLN Audio has invented a highly innovative tool to make it a reality. Life allows you to import audio taken from your favourite camera-roll memories (or elsewhere) and create enjoyable music out of it.

You can also download the Life Field Recorder app on your phone and record live audio for instant use, or load up the Life DAW recorder for the same function inside your favourite music production software on PC/Mac. Feel free to check out some of the demos XLN Audio have provided on their Life webpage to see a true demonstration of what this AI-driven software is capable of.

Mawf

Mawf is another entry on this list with high potential for use across the board. This handy addition uses AI to morph (Mawf) incoming audio into a specified instrument type (for example, a trumpet). So, yes… you can import a recording of your best friend snoring and the AI will attempt to mawf it into a usable, pitch-corrected instrument sample or MIDI pattern.

Perhaps a more likely application of this plugin would be to take a melody of a sample (say, an eight-bar synth loop) and transform it into a completely different instrument. With a whole host of modular parameters, you can instruct the AI to mawf the audio in varying ways, resulting in unique outcomes. Check out the official Mawf webpage for a more in-depth breakdown.

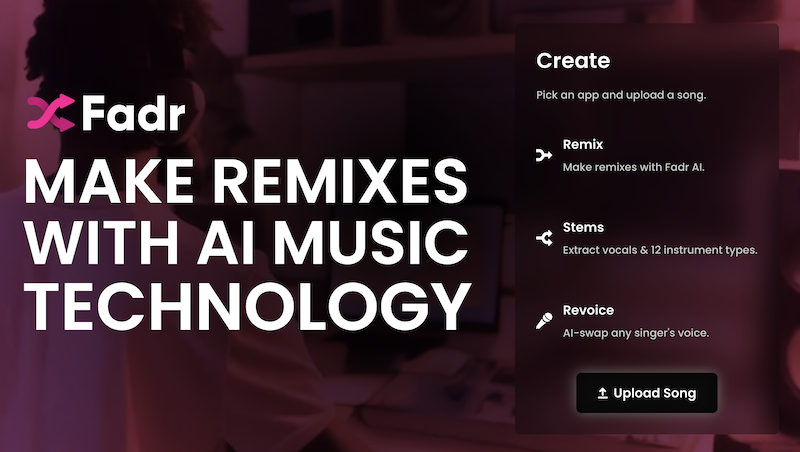

InstaComposer 2 effortlessly generates in-key MIDI data

The potential creative applications for AI in music production are widespread, but perhaps one of the handiest uses is MIDI generation. InstaComposer 2 is a tool that allows even those with minimal understanding of music theory to generate in-key melodies and chord MIDI data for use either inside the InstaComposer interface or transferred directly to your DAW for use with different digital instruments.

If our aim is to utilise AI tools for a more efficient workflow across the board, it’s important to highlight innovations that will likely find frequent use in your toolkit. Having the capability to effortlessly generate complementary MIDI patterns that are sure to harmonise well with your other elements is surely a valuable asset. Even if you’re well-versed in music theory, the time saved by using a tool such as InstaComposer makes it a stand-out candidate amongst recent AI releases.
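To make the idea of "in-key" MIDI data concrete, here is a minimal Python sketch. It is not how InstaComposer works internally (that isn't documented here); it simply picks random notes from a major scale, and the function names `in_key_melody` and `is_in_key` are made up for illustration.

```python
import random

# Semitone offsets of a major scale from its root note.
MAJOR_SCALE = [0, 2, 4, 5, 7, 9, 11]


def in_key_melody(root=60, length=8, seed=None):
    """Return `length` MIDI note numbers drawn from the major scale on `root`.

    Hypothetical helper: a random pick from the scale, not an AI model.
    MIDI note 60 is middle C, so the default key is C major.
    """
    rng = random.Random(seed)
    # Cover two octaves so the melody has some room to move.
    pool = [root + 12 * octave + step
            for octave in range(2)
            for step in MAJOR_SCALE]
    return [rng.choice(pool) for _ in range(length)]


def is_in_key(note, root=60):
    """True if the MIDI note belongs to the major scale built on `root`."""
    return (note - root) % 12 in MAJOR_SCALE


melody = in_key_melody(root=60, length=8, seed=1)
print(melody)
```

Every note in the result passes `is_in_key`, which is the guarantee an in-key generator gives you: whatever it produces will sit within the scale of your track. A real tool adds rhythm, chord awareness and musical phrasing on top of that basic constraint.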

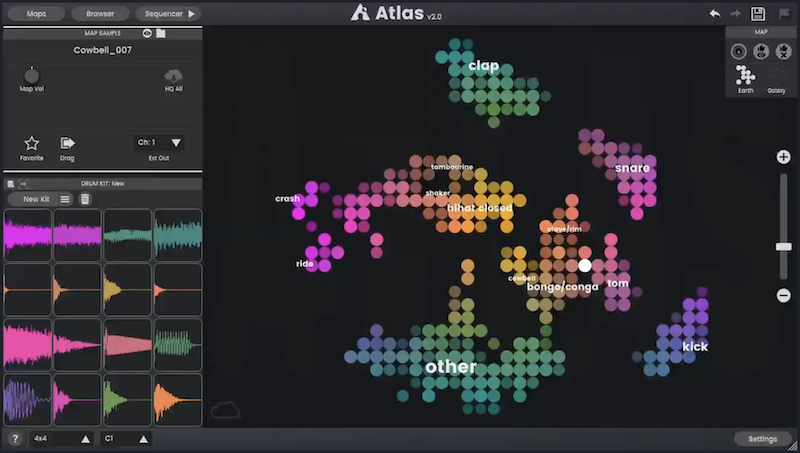

Algonaut Atlas 2 organises samples in a newfound way

One of the more innovative releases we’ve seen incorporating artificial intelligence is found within Algonaut’s Atlas 2. On the surface, Atlas is just a fancy sample library organiser. However, with added AI benefits, a drum sequencer, the ability to manipulate and chop samples, and an intuitive, visually appealing design, this plugin is sure to nest its way into some creators’ lists of go-to tools.

See your samples mapped out in groups across a neat visual spectrum (that looks like an atlas, hence the name) and use the built-in AI to filter complementary samples together unlike any other organiser. The software also has the added benefit of integrating with a wide range of hardware, so if you combine this with your favourite drum pad MIDI controller, you’ll be pumping out beats at a newfound haste.

Baby Audio TAIP: An AI-generated tape emulation

Once you head down the rabbit hole of tape saturation, you can tend to become a bit obsessive, and wonder why you hadn’t started applying it to your tracks sooner. To add to your tape-crazed addiction, Baby Audio have pioneered a fresh approach to digital tape emulation by utilising AI technology in the design of their plugin, TAIP.

What makes this software stand out is how Baby Audio went about building it. They fed a dedicated neural network a range of dry and wet (processed through analog tape) audio, making the computer decipher the exact harmonic differences between the two. This makes for a highly accurate digital emulation, and the creators have loaded the plugin with a number of different tape presets.
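If you’re curious what a "harmonic difference" between dry and wet audio looks like in numbers, the Python sketch below measures it for a single test tone. It is only an illustration: the `tanh` soft clipper is a stand-in we have assumed for the wet signal, not TAIP’s neural network or a real tape machine, and `harmonic_level` is a hypothetical helper.

```python
import cmath
import math


def harmonic_level(signal, harmonic, cycles):
    """Magnitude of one harmonic of the test tone, via a single-bin DFT."""
    n = len(signal)
    k = harmonic * cycles  # DFT bin that holds this harmonic
    total = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                for i, x in enumerate(signal))
    return 2 * abs(total) / n


N, CYCLES = 1024, 8  # 1024 samples containing exactly 8 cycles of the tone

# Dry signal: a pure sine wave.
dry = [math.sin(2 * math.pi * CYCLES * i / N) for i in range(N)]

# Wet signal: tanh soft clipping (an assumed stand-in for tape saturation).
wet = [math.tanh(2.0 * x) for x in dry]

for h in (1, 2, 3):
    print(f"harmonic {h}: "
          f"dry={harmonic_level(dry, h, CYCLES):.4f} "
          f"wet={harmonic_level(wet, h, CYCLES):.4f}")
```

The dry tone contains only its fundamental, while the saturated version gains energy at the third harmonic. Differences of this kind, across many signals and levels, are what a model trained on dry and wet pairs has to learn to reproduce.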

FAQs

Can AI replace music producers?

It seems unlikely. The AI revolution is creating tools which are helping producers push the boundaries of creativity and innovation.

Can AI write a good song?

Yes. It does this by analysing and mimicking sonic traits from existing songs, which also means that AI lacks the ability to innovate new sounds and genres.

Can AI master a song?

AI mastering platforms have been around in the music industry for years, with many services claiming to offer professional-level mastering through AI learning.